In the field of artificial intelligence (AI), a hallucination or artificial hallucination (also called bullshitting, confabulation or delusion) is a response generated by AI that contains false or misleading information presented as fact. This term draws a loose analogy with human psychology, where hallucination typically involves false percepts. However, there is a key difference: AI hallucination is associated with erroneous responses rather than perceptual experiences.

For example, a chatbot powered by large language models (LLMs), like ChatGPT, may embed plausible-sounding random falsehoods within its generated content. Researchers have recognized this issue, and by 2023, analysts estimated that chatbots hallucinate as much as 27% of the time, with factual errors present in 46% of generated texts. Detecting and mitigating these hallucinations pose significant challenges for practical deployment and reliability of LLMs in real-world scenarios. Some researchers believe the specific term "AI hallucination" unreasonably anthropomorphizes computers.

Term

Origin

In 1995, Stephen Thaler introduced the concept of "virtual input phenomena" in the context of neural networks and artificial intelligence. This idea is closely tied to his work on the Creativity Machine. Virtual input phenomena refer to the spontaneous generation of new ideas or concepts within a neural network, akin to hallucinations, without explicit external inputs. Thaler's key work on this topic is encapsulated in his U.S. patent "Device for the Autonomous Generation of Useful Information" (Patent No. US 5,659,666), granted in 1997. This patent describes a neural network system that can autonomously generate new information by simulating virtual inputs. The system effectively "imagines" new data as a result of various transient and permanent network disturbances, leading to innovative and creative outputs.

This concept is crucial in understanding how neural networks can be designed to exhibit creative behaviors, producing results that go beyond their initial training data and mimic aspects of human creativity and cognitive processes.

In the early 2000s, the term "hallucination" was used in computer vision with a positive connotation to describe the process of adding detail to an image. For example, the task of generating high-resolution face images from low-resolution inputs is called face hallucination.

In the late 2010s, the term underwent a semantic shift to signify the generation of factually incorrect or misleading outputs by AI systems in tasks like translation or object detection. For example, in 2017, Google researchers used the term to describe the responses generated by neural machine translation (NMT) models when they are not related to the source text, and in 2018, the term was used in computer vision to describe instances where non-existent objects are erroneously detected because of adversarial attacks.

The term "hallucinations" in AI gained wider recognition during the AI boom, alongside the rollout of widely used chatbots based on large language models (LLMs). In July 2021, Meta warned during its release of BlenderBot 2 that the system is prone to "hallucinations", which Meta defined as "confident statements that are not true". Following the beta release of OpenAI's ChatGPT in November 2022, some users complained that such chatbots often seem to pointlessly embed plausible-sounding random falsehoods within their generated content. Many news outlets, including The New York Times, started to use the term "hallucinations" to describe these models' occasionally incorrect or inconsistent responses.

In 2023, some dictionaries updated their definition of hallucination to include this new meaning specific to the field of AI.

Criticism

The term "hallucination" has been criticized by Usama Fayyad, executive director of the Institute for Experimental Artificial Intelligence at Northeastern University, on the grounds that it misleadingly personifies large language models, and that it is vague.

In natural language processing

In natural language processing, a hallucination is often defined as "generated content that appears factual but is ungrounded". Hallucinations can be categorized in different ways. Depending on whether the output contradicts the source or cannot be verified from it, they are divided into intrinsic and extrinsic hallucinations, respectively. Depending on whether or not the output contradicts the prompt, they can be divided into closed-domain and open-domain hallucinations, respectively.
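The intrinsic/extrinsic distinction can be illustrated with a minimal sketch. It assumes, hypothetically, that both the source and each generated claim have already been reduced to (subject, relation, object) triples; the company "Acme Corp" and its facts are invented for illustration, and practical systems rely on fact extraction and entailment models rather than exact lookups.

```python
from typing import Dict, Tuple

# A claim is a hypothetical (subject, relation, object) triple.
Fact = Tuple[str, str, str]


def classify_claim(claim: Fact, source: Dict[Tuple[str, str], str]) -> str:
    """Label a generated claim as faithful, intrinsic, or extrinsic.

    `source` maps (subject, relation) to the object stated in the source.
    """
    subject, relation, obj = claim
    stated = source.get((subject, relation))
    if stated is None:
        # The source says nothing about this: the claim cannot be verified.
        return "extrinsic"
    if stated != obj:
        # The source states something else: the claim contradicts it.
        return "intrinsic"
    return "faithful"


# Invented source facts about a fictional company.
source_facts = {
    ("Acme Corp", "headquartered_in"): "Springfield",
    ("Acme Corp", "founded_in"): "1990",
}

print(classify_claim(("Acme Corp", "founded_in", "1990"), source_facts))  # faithful
print(classify_claim(("Acme Corp", "founded_in", "1985"), source_facts))  # intrinsic
print(classify_claim(("Acme Corp", "ceo", "Jane Doe"), source_facts))     # extrinsic
```

An exact-match lookup like this cannot recognise paraphrases, which is why evaluations generally rely on learned verification models instead.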

Causes

There are several reasons for natural language models to hallucinate data.

Hallucination from data

The main cause of hallucination from data is source-reference divergence. This divergence arises either 1) as an artifact of heuristic data collection or 2) from the nature of some natural language generation (NLG) tasks, which inevitably contain such divergence; for example, a reference summary may mention facts that do not appear in the article it summarizes. When a model is trained on data with source-reference (target) divergence, it can be encouraged to generate text that is not necessarily grounded in, or faithful to, the provided source.
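As a rough illustration, source-reference divergence in a single training pair can be approximated by the share of reference words that never occur in the source. This unigram heuristic, the crude tokenizer, and the example sentences are assumptions made for the sketch, not a standard metric.

```python
import re


def tokens(text: str) -> list[str]:
    # Lowercased word tokens; a deliberately crude tokenizer.
    return re.findall(r"[a-z0-9]+", text.lower())


def divergence(source: str, reference: str) -> float:
    """Fraction of reference tokens absent from the source (0.0 = fully covered)."""
    src = set(tokens(source))
    ref = tokens(reference)
    if not ref:
        return 0.0
    unsupported = [t for t in ref if t not in src]
    return len(unsupported) / len(ref)


source = "The council approved the new bridge on Monday."
reference = "The council approved the bridge, which will cost 40 million dollars."
print(round(divergence(source, reference), 2))  # 0.55 (6 of 11 reference words unsupported)
```

Pairs scoring above some chosen cutoff could be filtered out before training, which is one simple form of the automatic data cleaning listed among mitigation methods.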

Hallucination from modeling

Hallucination was shown to be a statistically inevitable byproduct of any imperfect generative model that is trained to maximize training likelihood, such as GPT-3, and requires active learning (such as reinforcement learning from human feedback) to be avoided. Other research takes an anthropomorphic perspective and posits hallucinations as arising from a tension between novelty and usefulness. For instance, Teresa Amabile and Michael Pratt define human creativity as the production of novel and useful ideas. By extension, a focus on novelty in machine creativity can lead to the production of original but inaccurate responses, i.e. falsehoods, whereas a focus on usefulness can result in ineffectual, rote memorized responses.

Errors in encoding and decoding between text and representations can cause hallucinations. When encoders learn the wrong correlations between different parts of the training data, the result can be an erroneous generation that diverges from the input. The decoder takes the encoded input from the encoder and generates the final target sequence. Two aspects of decoding contribute to hallucinations. First, decoders can attend to the wrong part of the encoded input source, leading to erroneous generation. Second, the design of the decoding strategy itself can contribute to hallucinations. A decoding strategy that improves generation diversity, such as top-k sampling, is positively correlated with increased hallucination.
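The link between diversity-oriented decoding and hallucination can be seen in a toy next-token distribution. The sketch below, with invented logits for a single prompt, contrasts greedy decoding with top-k sampling: greedy decoding always picks the most likely token, whereas top-k sampling draws from the k most likely tokens in proportion to their renormalised probabilities, so it sometimes emits a plausible but wrong continuation.

```python
import math
import random


def softmax(logits: dict[str, float]) -> dict[str, float]:
    # Subtract the max logit for numerical stability.
    m = max(logits.values())
    exps = {tok: math.exp(v - m) for tok, v in logits.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def greedy(logits: dict[str, float]) -> str:
    return max(logits, key=logits.get)


def top_k_sample(logits: dict[str, float], k: int, rng: random.Random) -> str:
    # Keep the k highest-scoring tokens, renormalise, then sample one.
    top = sorted(logits, key=logits.get, reverse=True)[:k]
    probs = softmax({tok: logits[tok] for tok in top})
    return rng.choices(list(probs), weights=list(probs.values()), k=1)[0]


# Invented logits for the token after "The capital of Australia is".
logits = {"Canberra": 3.0, "Sydney": 2.0, "Melbourne": 1.5, "Paris": -1.0}

rng = random.Random(0)
samples = [top_k_sample(logits, k=3, rng=rng) for _ in range(1000)]
wrong = sum(tok != "Canberra" for tok in samples) / len(samples)
print(greedy(logits), wrong)
```

With these numbers, roughly 37% of top-3 samples are expected to name a city other than Canberra (the exact fraction depends on the seed), while setting k=1 reduces the sampler to greedy decoding.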

Pre-training of models on a large corpus is known to result in the model memorizing knowledge in its parameters, creating hallucinations if the system is overconfident in its hardwired knowledge. In systems such as GPT-3, an AI generates each next word based on a sequence of previous words (including the words it has itself previously generated during the same conversation), causing a cascade of possible hallucination as the response grows longer. By 2022, newspapers such as The New York Times expressed concern that, as adoption of bots based on large language models continued to grow, unwarranted user confidence in bot output could lead to problems.
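The compounding effect of autoregressive generation can be made concrete with a toy two-state model. It assumes, purely for illustration, that each generated sentence is hallucinated with probability `p_clean` while everything generated so far is correct, and with a higher probability `p_after` once an earlier sentence has been hallucinated, in line with the finding reported in the mitigation literature that models hallucinate more often after they have already hallucinated. Both rates are hypothetical inputs, not measured values.

```python
def hallucination_stats(n: int, p_clean: float, p_after: float) -> tuple[float, float]:
    """Return (P(at least one hallucinated sentence), expected hallucinated sentences).

    Toy model: a sentence is hallucinated with probability p_clean while the
    history is error-free, and with probability p_after once any earlier
    sentence in the response was hallucinated.
    """
    clean = 1.0      # probability that no sentence so far was hallucinated
    expected = 0.0   # running expected number of hallucinated sentences
    for _ in range(n):
        # Probability this sentence is hallucinated, averaged over both states.
        expected += clean * p_clean + (1.0 - clean) * p_after
        clean *= 1.0 - p_clean
    return 1.0 - clean, expected


for n in (1, 5, 20):
    p_any, mean = hallucination_stats(n, p_clean=0.02, p_after=0.3)
    print(f"{n:>2} sentences: P(any)={p_any:.3f}, expected={mean:.2f}")
```

With `p_after` equal to `p_clean`, the expected count reduces to n times `p_clean`; raising `p_after` makes later sentences progressively more error-prone, so the expected count grows faster than in that independent case.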

Examples

On 15 November 2022, researchers from Meta AI published Galactica, designed to "store, combine and reason about scientific knowledge". Content generated by Galactica came with the warning "Outputs may be unreliable! Language Models are prone to hallucinate text." In one case, when asked to draft a paper on creating avatars, Galactica cited a fictitious paper from a real author who works in the relevant area. Meta withdrew Galactica on 17 November due to offensiveness and inaccuracy. Before the cancellation, researchers were working on Galactica Instruct, which would use instruction tuning to allow the model to follow instructions to manipulate LaTeX documents on Overleaf.

OpenAI's ChatGPT, released in beta-version to the public on November 30, 2022, is based on the foundation model GPT-3.5 (a revision of GPT-3). Professor Ethan Mollick of Wharton has called ChatGPT an "omniscient, eager-to-please intern who sometimes lies to you". Data scientist Teresa Kubacka has recounted deliberately making up the phrase "cycloidal inverted electromagnon" and testing ChatGPT by asking it about the (nonexistent) phenomenon. ChatGPT invented a plausible-sounding answer backed with plausible-looking citations that compelled her to double-check whether she had accidentally typed in the name of a real phenomenon. Other scholars such as Oren Etzioni have joined Kubacka in assessing that such software can often give "a very impressive-sounding answer that's just dead wrong".

When CNBC asked ChatGPT for the lyrics to "Ballad of Dwight Fry", ChatGPT supplied invented lyrics rather than the actual lyrics. Asked questions about New Brunswick, ChatGPT got many answers right but incorrectly classified Samantha Bee as a "person from New Brunswick". Asked about astrophysical magnetic fields, ChatGPT incorrectly volunteered that "(strong) magnetic fields of black holes are generated by the extremely strong gravitational forces in their vicinity". (In reality, as a consequence of the no-hair theorem, a black hole without an accretion disk is believed to have no magnetic field.) Fast Company asked ChatGPT to generate a news article on Tesla's last financial quarter; ChatGPT created a coherent article, but made up the financial numbers contained within.

Other examples involve baiting ChatGPT with a false premise to see if it embellishes upon the premise. When asked about "Harold Coward's idea of dynamic canonicity", ChatGPT fabricated that Coward wrote a book titled Dynamic Canonicity: A Model for Biblical and Theological Interpretation, arguing that religious principles are actually in a constant state of change. When pressed, ChatGPT continued to insist that the book was real. Asked for proof that dinosaurs built a civilization, ChatGPT claimed there were fossil remains of dinosaur tools and stated "Some species of dinosaurs even developed primitive forms of art, such as engravings on stones". When prompted that "Scientists have recently discovered churros, the delicious fried-dough pastries ... (are) ideal tools for home surgery", ChatGPT claimed that a "study published in the journal Science" found that the dough is pliable enough to form into surgical instruments that can get into hard-to-reach places, and that the flavor has a calming effect on patients.

By 2023, analysts considered frequent hallucination to be a major problem in LLM technology, with a Google executive identifying hallucination reduction as a "fundamental" task for ChatGPT competitor Google Bard. A 2023 demo for Microsoft's GPT-based Bing AI appeared to contain several hallucinations that went uncaught by the presenter.

In May 2023, it was discovered that Steven Schwartz had submitted six fake case precedents generated by ChatGPT in his brief to the Southern District of New York on Mata v. Avianca, a personal injury case against the airline Avianca. Schwartz said that he had never previously used ChatGPT, that he did not recognize the possibility that ChatGPT's output could have been fabricated, and that ChatGPT continued to assert the authenticity of the precedents after their nonexistence was discovered. In response, Judge Brantley Starr of the Northern District of Texas banned the submission of AI-generated case filings that have not been reviewed by a human, noting that:

platforms in their current states are prone to hallucinations and bias. On hallucinations, they make stuff up—even quotes and citations. Another issue is reliability or bias. While attorneys swear an oath to set aside their personal prejudices, biases, and beliefs to faithfully uphold the law and represent their clients, generative artificial intelligence is the product of programming devised by humans who did not have to swear such an oath. As such, these systems hold no allegiance to any client, the rule of law, or the laws and Constitution of the United States (or, as addressed above, the truth). Unbound by any sense of duty, honor, or justice, such programs act according to computer code rather than conviction, based on programming rather than principle.

On June 23, judge P. Kevin Castel dismissed the Mata case and fined Schwartz and another lawyer $5,000 for bad faith conduct; both had continued to stand by the fictitious precedents despite Schwartz's previous claims. Castel noted numerous errors and inconsistencies in the opinion summaries, describing one of the cited opinions as "gibberish" and "[bordering] on nonsensical".

In June 2023, Mark Walters, a gun rights activist and radio personality, sued OpenAI in a Georgia state court after ChatGPT mischaracterized a legal complaint in a manner alleged to be defamatory against Walters. The complaint in question was brought in May 2023 by the Second Amendment Foundation against Washington attorney general Robert W. Ferguson for allegedly violating their freedom of speech, whereas the ChatGPT-generated summary bore no resemblance to it and claimed that Walters was accused of embezzlement and fraud while holding a Second Amendment Foundation office post that he never held in real life. According to AI legal expert Eugene Volokh, OpenAI is likely not shielded against this claim by Section 230, because OpenAI likely "materially contributed" to the creation of the defamatory content.

Scientific research

AI models can cause problems in academic and scientific research because of their hallucinations. In particular, models such as ChatGPT have been documented in multiple cases citing sources that are either incorrect or nonexistent. A study published in the Cureus Journal of Medical Science found that of 178 references cited by GPT-3, 69 returned an incorrect or nonexistent digital object identifier (DOI), and a further 28 had no known DOI and could not be located in a Google search. On the other hand, hallucinations have emerged as a valuable tool in generating breakthrough protein designs in the field of structural biology, as well as a method for designing innovative medical devices, improving medical imaging, and advancing robot navigation.

Another instance was documented by Jerome Goddard of Mississippi State University. In an experiment, ChatGPT provided questionable information about ticks. Unsure about the validity of the response, Goddard asked for the source of the information. On inspection, it was apparent that the DOI and the names of the authors had been hallucinated. Some of the named authors were contacted and confirmed that they had no knowledge of the paper's existence. Goddard says that, "in current state of development, physicians and biomedical researchers should NOT ask ChatGPT for sources, references, or citations on a particular topic. Or, if they do, all such references should be carefully vetted for accuracy." Such cases suggest that these language models are not yet ready for use in academic research and that their use should be handled carefully.

Besides providing incorrect or missing reference material, ChatGPT also hallucinates the contents of some reference material. A study that analyzed 115 references provided by ChatGPT found that 47% of them were fabricated. Another 46% cited real references but extracted incorrect information from them. Only the remaining 7% were cited correctly and provided accurate information. ChatGPT has also been observed to "double down" on much of the incorrect information: when asked about a possibly hallucinated mistake, it sometimes corrects itself, but at other times it insists the response is correct and provides even more misleading information.

Hallucinated articles generated by language models also pose a problem because it is difficult to tell whether an article was generated by an AI. To show this, a group of researchers at Northwestern University in Chicago generated 50 abstracts based on existing reports and analyzed their originality. Plagiarism detectors gave the generated abstracts an originality score of 100%, meaning that the information presented appears to be completely original. Software designed to detect AI-generated text identified the generated abstracts with an accuracy of only 66%, and research scientists performed similarly, identifying them at a rate of 68%. From this information, the authors of the study concluded, "[T]he ethical and acceptable boundaries of ChatGPT's use in scientific writing remain unclear, although some publishers are beginning to lay down policies." Because AI can fabricate research undetected, its use in research will make the originality of research harder to determine and will require new policies regulating its use.

Because AI-generated language can in some cases pass as real scientific research and go undetected by researchers, hallucinations pose problems for applying language models in academic and scientific research. The high likelihood of returning nonexistent reference material and incorrect information may require limits to be placed on the use of these language models. Some argue that these events are better described as "fabrications" and "falsifications" than as hallucinations, and that the use of these language models poses a risk to the integrity of the field as a whole.

Terminologies

In Salon, statistician Gary N. Smith argues that LLMs "do not understand what words mean" and consequently that the term "hallucination" unreasonably anthropomorphizes the machine. Journalist Benj Edwards, in Ars Technica, writes that the term "hallucination" is controversial, but that some form of metaphor remains necessary; Edwards suggests "confabulation" as an analogy for processes that involve "creative gap-filling".

Uses of the term "hallucination", and definitions or characterizations of it, in the context of LLMs include:

- "a tendency to invent facts in moments of uncertainty" (OpenAI, May 2023)

- "a model's logical mistakes" (OpenAI, May 2023)

- "fabricating information entirely, but behaving as if spouting facts" (CNBC, May 2023)

- "making up information" (The Verge, February 2023)

In other artificial intelligence use

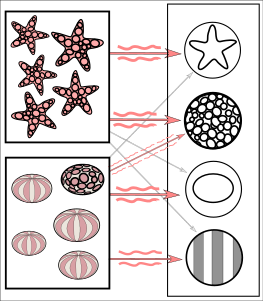

The images above demonstrate an example of how an artificial neural network might make a false positive result in object detection. The input image is a simplified example of the training phase, using multiple images that are known to depict starfish and sea urchins, respectively. The starfish match with a ringed texture and a star outline, whereas most sea urchins match with a striped texture and oval shape. However, the instance of a ring textured sea urchin creates a weakly weighted association between them.

Subsequent run of the network on an input image (left): The network correctly detects the starfish. However, the weakly weighted association between ringed texture and sea urchin also confers a weak signal to the latter from one of two intermediate nodes. In addition, a shell that was not included in the training gives a weak signal for the oval shape, also resulting in a weak signal for the sea urchin output. These weak signals may result in a false positive result for the presence of a sea urchin although there was none in the input image.

In reality, textures and outlines would not be represented by single nodes, but rather by associated weight patterns of multiple nodes.
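The starfish and sea urchin scenario can be reproduced with a toy single-layer detector. The feature names, the weights (including the weak 0.25 link between ringed texture and sea urchin), the 0.6 "oval" activation caused by the shell, and the 0.8 detection threshold are all invented for illustration; as the caption notes, real networks spread such features across many nodes.

```python
FEATURES = ("ringed_texture", "star_outline", "striped_texture", "oval_shape")

# Invented weights from features to output classes.
WEIGHTS = {
    "starfish": {"ringed_texture": 1.0, "star_outline": 1.0},
    "sea_urchin": {
        "striped_texture": 1.0,
        "oval_shape": 1.0,
        "ringed_texture": 0.25,  # weak link learned from one ring-textured urchin
    },
}
THRESHOLD = 0.8  # hypothetical score above which an object is reported


def detect(activations: dict[str, float],
           weights: dict[str, dict[str, float]] = WEIGHTS,
           threshold: float = THRESHOLD) -> dict[str, float]:
    """Return every class whose weighted feature sum exceeds the threshold."""
    detections = {}
    for label, w in weights.items():
        score = sum(w.get(f, 0.0) * activations.get(f, 0.0) for f in FEATURES)
        if score > threshold:
            detections[label] = score
    return detections


# Test image: a ringed starfish next to an unfamiliar shell that weakly looks oval.
image = {"ringed_texture": 1.0, "star_outline": 1.0, "oval_shape": 0.6}
print(detect(image))  # {'starfish': 2.0, 'sea_urchin': 0.85}
```

Removing the weak 0.25 weight lowers the sea urchin score to 0.6, below the threshold, so only the starfish is reported: the false positive comes from two weak signals adding up.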

The concept of "hallucination" is applied more broadly than just natural language processing. A confident response from any AI that appears unjustified by its training data can be labeled a hallucination.

Object detection

Various researchers cited by Wired have classified adversarial hallucinations as a high-dimensional statistical phenomenon, or have attributed hallucinations to insufficient training data. Some researchers believe that some "incorrect" AI responses classified by humans as "hallucinations" in the case of object detection may in fact be justified by the training data, or even that an AI may be giving the "correct" answer that the human reviewers are failing to see. For example, an adversarial image that looks, to a human, like an ordinary image of a dog, may in fact be seen by the AI to contain tiny patterns that (in authentic images) would only appear when viewing a cat. The AI is detecting real-world visual patterns that humans are insensitive to.
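The idea that tiny patterns can flip a model's decision is commonly illustrated with the fast gradient sign method (FGSM), a well-known attack not named in the sources above. The sketch applies it to a hypothetical linear "cat versus dog" scorer over 10,000 random pixel values; the model, labels, and data are invented. For a linear score the gradient with respect to the input is simply the weight vector, so shifting every pixel by a small `eps` in the direction of its weight's sign adds up to a large change across many dimensions.

```python
import random


def score(w: list[float], x: list[float], b: float = 0.0) -> float:
    # Linear score: positive means "cat", negative means "dog" (hypothetical labels).
    return sum(wi * xi for wi, xi in zip(w, x)) + b


def fgsm_linear(w: list[float], x: list[float], eps: float) -> list[float]:
    """Shift each pixel by eps toward sign(w_i), clipped to the valid range [0, 1].

    For a linear score the input gradient is w itself, so this raises the
    score by up to eps * sum(|w_i|) while changing no pixel by more than eps.
    """
    def sign(v: float) -> int:
        return (v > 0) - (v < 0)

    return [min(1.0, max(0.0, xi + eps * sign(wi))) for wi, xi in zip(w, x)]


rng = random.Random(0)
d = 10_000                                   # number of pixels
w = [rng.gauss(0.0, 1.0) for _ in range(d)]  # invented model weights
x = [rng.random() for _ in range(d)]         # invented "dog" image
b = -score(w, x) - 1.0                       # bias chosen so the clean image scores -1

x_adv = fgsm_linear(w, x, eps=0.01)
print(f"clean: {score(w, x, b):.2f}, perturbed: {score(w, x_adv, b):.2f}")
print("largest pixel change:", max(abs(a - c) for a, c in zip(x_adv, x)))
```

Although each pixel moves by at most 0.01, the score rises by roughly 0.01 times the sum of the 10,000 weight magnitudes, enough to push the clean score of -1 well into the "cat" region. The effect grows with input dimension, which fits the description of adversarial hallucinations as a high-dimensional statistical phenomenon.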

Wired noted in 2018 that, despite no recorded attacks "in the wild" (that is, outside of proof-of-concept attacks by researchers), there was "little dispute" that consumer gadgets, and systems such as automated driving, were susceptible to adversarial attacks that could cause AI to hallucinate. Examples included a stop sign rendered invisible to computer vision; an audio clip engineered to sound innocuous to humans, but that software transcribed as "evil dot com"; and an image of two men on skis, that Google Cloud Vision identified as 91% likely to be "a dog". However, these findings have been challenged by other researchers. For example, it has been objected that models can be biased towards superficial statistics, so that adversarial training is not robust in real-world scenarios.

Text-to-audio generative AI

Text-to-audio generative AI, which depending on the modality is more narrowly called text-to-speech (TTS) synthesis, is known to produce inaccurate and unexpected results.

Text-to-image generative AI

Text-to-image models, such as Stable Diffusion, Midjourney and others, while impressive in their ability to generate images from text descriptions, often produce inaccurate or unexpected results.

One notable issue is the generation of historically inaccurate images. For instance, Google's Gemini depicted ancient Romans as black individuals and Nazi German soldiers as people of color, causing controversy and leading Google to pause image generation involving people in Gemini.

Text-to-video generative AI

Text-to-video generative models, like Sora, can introduce inaccuracies in generated videos. One example involves the Glenfinnan Viaduct, a famous landmark featured in the Harry Potter film series. Sora mistakenly added a second track to the viaduct railway, resulting in an unrealistic depiction.

Mitigation methods

The hallucination phenomenon is still not completely understood, and research into mitigating its occurrence is ongoing. Some researchers have proposed that hallucinations are inevitable, an innate limitation of large language models. In particular, language models have been shown not only to hallucinate but also to amplify hallucinations, including models designed to alleviate the issue.

Ji et al. divide common mitigation methods into two categories: data-related methods, and modeling and inference methods. Data-related methods include building a faithful dataset, cleaning data automatically, and information augmentation, which augments the inputs with external information. Modeling and inference methods include changes to the architecture (modifying the encoder, the attention mechanism, or the decoder in various ways), changes to the training process such as using reinforcement learning, and post-processing methods that can correct hallucinations in the output.
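Information augmentation can be sketched as retrieving relevant text and placing it in the prompt, together with an instruction to rely on it. In the sketch below the keyword-overlap retriever, the prompt wording, and the document snippets (restated from this article) are illustrative assumptions; the call to an actual language model is omitted.

```python
import re


def tokens(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list[str], k: int = 2) -> list[str]:
    """Rank documents by word overlap with the question (a toy stand-in for a real retriever)."""
    q = tokens(question)
    ranked = sorted(documents, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]


def augment(question: str, documents: list[str], k: int = 2) -> str:
    """Build a prompt that grounds the question in the retrieved context."""
    context = "\n".join(f"- {d}" for d in retrieve(question, documents, k))
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


docs = [
    "Galactica was published by Meta AI on 15 November 2022.",
    "Meta withdrew Galactica on 17 November 2022.",
    "Sora is a text-to-video model.",
]
print(augment("When did Meta withdraw Galactica?", docs))
```

Grounding the prompt in retrieved text does not by itself guarantee a faithful answer, since the model may still ignore or misread the context.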

Researchers have proposed a variety of mitigation measures, including getting different chatbots to debate one another until they reach consensus on an answer. Another approach actively validates the correctness of the model's low-confidence generations using web search results. Its authors showed that a generated sentence is hallucinated more often when the model has already hallucinated in previously generated sentences for the same input; their method instructs the model to create a validation question that checks the correctness of the information about a selected concept using the Bing search API. An additional layer of logic-based rules has been proposed for this web-search mitigation method, using web pages of different ranks as a hierarchical knowledge base.
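The low-confidence validation idea can be outlined as follows. This is a simplified sketch rather than the published method: the per-token probabilities, the 0.5 threshold, the question template, and the `search` function (an offline stub standing in for a web search API such as Bing) are all hypothetical, and support is judged by a crude substring match.

```python
from typing import Callable


def low_confidence_concepts(concepts: dict[str, list[float]],
                            threshold: float = 0.5) -> list[str]:
    """Concepts whose least confident token probability is below the threshold."""
    return [c for c, probs in concepts.items() if min(probs) < threshold]


def validate(sentence: str,
             concepts: dict[str, list[float]],
             search: Callable[[str], list[str]],
             threshold: float = 0.5) -> list[tuple[str, str, bool]]:
    """Return (concept, validation question, supported?) for each uncertain concept."""
    results = []
    for concept in low_confidence_concepts(concepts, threshold):
        question = f'{sentence.rstrip(".")}: is "{concept}" correct?'
        snippets = search(question)
        # Crude check: does any retrieved snippet mention the concept at all?
        supported = any(concept.lower() in s.lower() for s in snippets)
        results.append((concept, question, supported))
    return results


def fake_search(query: str) -> list[str]:
    # Offline stub standing in for a real web search call.
    return ["The Acme Bridge opened to traffic in 1932."]


sentence = "The Acme Bridge opened in 1932 and was designed by Jane Doe."
token_probs = {  # invented model probabilities for each concept's tokens
    "Acme Bridge": [0.95, 0.90],
    "1932": [0.40],
    "Jane Doe": [0.30, 0.70],
}
for concept, question, ok in validate(sentence, token_probs, fake_search):
    print(f"{concept!r}: {'supported' if ok else 'possible hallucination'}")
```

A flagged sentence could then be corrected or removed before generation continues, which matters because later sentences are conditioned on earlier ones.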

According to Luo et al., the previous methods fall into knowledge- and retrieval-based approaches, which ground LLM responses in factual data using external knowledge sources, such as path grounding. Luo et al. also mention training or reference guiding for language models, which involves strategies such as employing control codes or contrastive learning to guide the generation process to differentiate between correct and hallucinated content. Another category is evaluation and mitigation focused on specific hallucination types, such as methods to evaluate quantity entities in summarization and methods to detect and mitigate self-contradictory statements.
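A very simple check in the spirit of quantity-entity evaluation for summarization flags numbers in a summary that never appear in the source. This is an illustrative assumption, not a published metric: it compares digit strings exactly, so it misses spelled-out numbers, rounding, and unit conversions, and the example texts are invented.

```python
import re

# Integers and decimals, optionally with thousands separators, e.g. 37, 24.3, 1,200.
NUMBER = re.compile(r"\d+(?:[.,]\d+)*")


def numbers(text: str) -> set[str]:
    # Drop thousands separators so "1,200" and "1200" compare equal.
    return {n.replace(",", "") for n in NUMBER.findall(text)}


def unsupported_quantities(source: str, summary: str) -> set[str]:
    """Numbers that appear in the summary but nowhere in the source."""
    return numbers(summary) - numbers(source)


source = "Acme's revenue rose to 24.3 billion dollars, up 37 percent from last year."
summary = "Acme's revenue rose 37 percent to 25.1 billion dollars."
print(unsupported_quantities(source, summary))  # {'25.1'}
```

A check like this would catch the kind of invented financial figures described in the Fast Company example, provided the source document is available for comparison.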

Nvidia Guardrails, launched in 2023, can be configured to hard-code certain responses via script instead of leaving them to the LLM. Furthermore, numerous tools like SelfCheckGPT and Aimon have emerged to aid in the detection of hallucination in offline experimentation and real-time production scenarios.
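The consistency idea behind tools such as SelfCheckGPT can be sketched without any model: sample several answers to the same prompt and treat sentences of the main answer that the samples do not echo as suspect. The word-overlap score below is a simplified assumption, not SelfCheckGPT's own implementation (which uses stronger scoring methods such as natural language inference), and the response and sample texts are invented.

```python
import re


def words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def inconsistency_scores(response_sentences: list[str], samples: list[str]) -> list[float]:
    """Score each sentence by how poorly independently sampled answers support it.

    For each sentence, take the fraction of its words missing from each sample,
    then average over samples: 0.0 means every sample contains all of its words,
    1.0 means none do. Higher scores suggest a possible hallucination.
    """
    sample_words = [words(s) for s in samples]
    scores = []
    for sentence in response_sentences:
        w = words(sentence)
        if not w or not sample_words:
            scores.append(0.0)
            continue
        missing = [len(w - sw) / len(w) for sw in sample_words]
        scores.append(sum(missing) / len(missing))
    return scores


response = ["The bridge opened in 1932.", "It was designed by Jane Doe."]
samples = [
    "The bridge opened in 1932 and was designed by John Smith.",
    "Opened in 1932, the bridge was designed by a city engineer.",
    "The bridge opened in 1932.",
]
for sentence, s in zip(response, inconsistency_scores(response, samples)):
    print(f"{s:.2f}  {sentence}")
```

Here the claim about the designer is not echoed by any sample and scores higher than the opening-date sentence; a practical scorer would also need to discount function words, which this sketch does not.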

References

- Dolan, Eric W. (9 June 2024). "Scholars: AI isn't "hallucinating" -- it's bullshitting". PsyPost - Psychology News. Archived from the original on 11 June 2024. Retrieved 11 June 2024.

- Hicks, Michael Townsen; Humphries, James; Slater, Joe (8 June 2024). "ChatGPT is bullshit". Ethics and Information Technology. 26 (2): 38. doi:10.1007/s10676-024-09775-5. ISSN 1572-8439.

- Edwards, Benj (6 April 2023). "Why ChatGPT and Bing Chat are so good at making things up". Ars Technica. Archived from the original on 11 June 2023. Retrieved 11 June 2023.

- "Shaking the foundations: delusions in sequence models for interaction and control". www.deepmind.com. 22 December 2023. Archived from the original on 26 March 2023. Retrieved 18 March 2023.

- "Definition of HALLUCINATION". www.merriam-webster.com. 21 October 2023. Archived from the original on 7 October 2023. Retrieved 29 October 2023.

- Joshua Maynez; Shashi Narayan; Bernd Bohnet; Ryan McDonald (2020). "On Faithfulness and Factuality in Abstractive Summarization". Proceedings of The 58th Annual Meeting of the Association for Computational Linguistics (ACL) (2020). arXiv:2005.00661. Archived from the original on 26 September 2023. Retrieved 26 September 2023.

- Ji, Ziwei; Lee, Nayeon; Frieske, Rita; Yu, Tiezheng; Su, Dan; Xu, Yan; Ishii, Etsuko; Bang, Yejin; Dai, Wenliang; Madotto, Andrea; Fung, Pascale (November 2022). "Survey of Hallucination in Natural Language Generation" (pdf). ACM Computing Surveys. 55 (12). Association for Computing Machinery: 1–38. arXiv:2202.03629. doi:10.1145/3571730. S2CID 246652372. Archived from the original on 26 March 2023. Retrieved 15 January 2023.

- Metz, Cade (6 November 2023). "Chatbots May 'Hallucinate' More Often Than Many Realize". The New York Times. Archived from the original on 7 December 2023. Retrieved 6 November 2023.

- de Wynter, Adrian; Wang, Xun; Sokolov, Alex; Gu, Qilong; Chen, Si-Qing (13 July 2023). "An evaluation on large language model outputs: Discourse and memorization". Natural Language Processing Journal. 4. arXiv:2304.08637. doi:10.1016/j.nlp.2023.100024. ISSN 2949-7191.

- Leswing, Kif (14 February 2023). "Microsoft's Bing A.I. made several factual errors in last week's launch demo". CNBC. Archived from the original on 16 February 2023. Retrieved 16 February 2023.

- Thaler, Stephen (December 1995). "Virtual input phenomena within the death of a simple pattern associator". Neural Networks. 8 (1): 55–6. doi:10.1016/0893-6080(94)00065-T.

- Thaler, Stephen (January 2013). "The Creativity Machine Paradigm". In Carayannis, Elias G. (ed.). Encyclopedia of Creativity, Invention, Innovation and Entrepreneurship. Springer Science+Business Media, LLC. pp. 447–456. doi:10.1007/978-1-4614-3858-8_396. ISBN 978-1-4614-3857-1.

- "AI Hallucinations: A Misnomer Worth Clarifying". arxiv.org. Archived from the original on 2 April 2024. Retrieved 2 April 2024.

- "Face Hallucination". people.csail.mit.edu. Archived from the original on 30 March 2024. Retrieved 2 April 2024.

- "Hallucinations in Neural Machine Translation". research.google. Archived from the original on 2 April 2024. Retrieved 2 April 2024.

- Simonite, Tom (9 March 2018). "AI Has a Hallucination Problem That's Proving Tough to Fix". Wired. Condé Nast. Archived from the original on 5 April 2023. Retrieved 29 December 2022.

- Zhuo, Terry Yue; Huang, Yujin; Chen, Chunyang; Xing, Zhenchang (2023). "Exploring AI Ethics of ChatGPT: A Diagnostic Analysis". arXiv:2301.12867.

- "Blender Bot 2.0: An open source chatbot that builds long-term memory and searches the internet". ai.meta.com. Retrieved 2 March 2024.

- Tung, Liam (8 August 2022). "Meta warns its new chatbot may forget that it's a bot". ZDNET. Archived from the original on 26 March 2023. Retrieved 30 December 2022.

- Seife, Charles (13 December 2022). "The Alarming Deceptions at the Heart of an Astounding New Chatbot". Slate. Archived from the original on 26 March 2023. Retrieved 16 February 2023.

- Weise, Karen; Metz, Cade (1 May 2023). "When A.I. Chatbots Hallucinate". The New York Times. ISSN 0362-4331. Archived from the original on 4 April 2024. Retrieved 8 May 2023.

- Creamer, Ella (15 November 2023). "'Hallucinate' chosen as Cambridge dictionary's word of the year". The Guardian. Retrieved 7 June 2024.

- Stening, Tanner (10 November 2023). "What are AI chatbots actually doing when they 'hallucinate'? Here's why experts don't like the term". Northeastern Global News. Retrieved 14 June 2024.

- Tonmoy, S. M. Towhidul Islam; Zaman, S. M. Mehedi; Jain, Vinija; Rani, Anku; Rawte, Vipula; Chadha, Aman; Das, Amitava (8 January 2024). "A Comprehensive Survey of Hallucination Mitigation Techniques in Large Language Models". arXiv:2401.01313.

- OpenAI (2023). "GPT-4 Technical Report". arXiv:2303.08774.

- Hanneke, Steve; Kalai, Adam Tauman; Kamath, Gautam; Tzamos, Christos (2018). Actively Avoiding Nonsense in Generative Models. Vol. 75. Proceedings of Machine Learning Research (PMLR). pp. 209–227.

- Amabile, Teresa M.; Pratt, Michael G. (2016). "The dynamic componential model of creativity and innovation in organizations: Making progress, making meaning". Research in Organizational Behavior. 36: 157–183. doi:10.1016/j.riob.2016.10.001. S2CID 44444992.

- Mukherjee, Anirban; Chang, Hannah H. (2023). "Managing the Creative Frontier of Generative AI: The Novelty-Usefulness Tradeoff". California Management Review. Archived from the original on 5 January 2024. Retrieved 5 January 2024.

- Metz, Cade (10 December 2022). "The New Chatbots Could Change the World. Can You Trust Them?". The New York Times. Archived from the original on 17 January 2023. Retrieved 30 December 2022.

- Taylor, Ross; Kardas, Marcin; Cucurull, Guillem; Scialom, Thomas; Hartshorn, Anthony; Saravia, Elvis; Poulton, Andrew; Kerkez, Viktor; Stojnic, Robert (16 November 2022). "Galactica: A Large Language Model for Science". arXiv:2211.09085.

- Edwards, Benj (18 November 2022). "New Meta AI demo writes racist and inaccurate scientific literature, gets pulled". Ars Technica. Archived from the original on 10 April 2023. Retrieved 30 December 2022.

- Scialom, Thomas (23 July 2024). "Llama 2, 3 & 4: Synthetic Data, RLHF, Agents on the path to Open Source AGI". Latent Space (Interview). Interviewed by swyx & Alessio. Archived from the original on 24 July 2024.

- Bowman, Emma (19 December 2022). "A new AI chatbot might do your homework for you. But it's still not an A+ student". NPR. Archived from the original on 20 January 2023. Retrieved 29 December 2022.

- Pitt, Sofia (15 December 2022). "Google vs. ChatGPT: Here's what happened when I swapped services for a day". CNBC. Archived from the original on 16 January 2023. Retrieved 30 December 2022.

- Huizinga, Raechel (30 December 2022). "We asked an AI questions about New Brunswick. Some of the answers may surprise you". CBC News. Archived from the original on 6 January 2023. Retrieved 30 December 2022.

- Zastrow, Mark (30 December 2022). "We Asked ChatGPT Your Questions About Astronomy. It Didn't Go so Well". Discover. Archived from the original on 26 March 2023. Retrieved 31 December 2022.

- Lin, Connie (5 December 2022). "How to easily trick OpenAI's genius new ChatGPT". Fast Company. Archived from the original on 29 March 2023. Retrieved 6 January 2023.

- Edwards, Benj (1 December 2022). "OpenAI invites everyone to test ChatGPT, a new AI-powered chatbot—with amusing results". Ars Technica. Archived from the original on 15 March 2023. Retrieved 29 December 2022.

- Mollick, Ethan (14 December 2022). "ChatGPT Is a Tipping Point for AI". Harvard Business Review. Archived from the original on 11 April 2023. Retrieved 29 December 2022.

- Kantrowitz, Alex (2 December 2022). "Finally, an A.I. Chatbot That Reliably Passes 'the Nazi Test'". Slate. Archived from the original on 17 January 2023. Retrieved 29 December 2022.

- Marcus, Gary (2 December 2022). "How come GPT can seem so brilliant one minute and so breathtakingly dumb the next?". The Road to AI We Can Trust. Substack. Archived from the original on 30 December 2022. Retrieved 29 December 2022.

- "Google cautions against 'hallucinating' chatbots, report says". Reuters. 11 February 2023. Archived from the original on 6 April 2023. Retrieved 16 February 2023.

- Maruf, Ramishah (27 May 2023). "Lawyer apologizes for fake court citations from ChatGPT". CNN Business.

- Brodkin, Jon (31 May 2023). "Federal judge: No AI in my courtroom unless a human verifies its accuracy". Ars Technica. Archived from the original on 26 June 2023. Retrieved 26 June 2023.

- "Judge Brantley Starr". Northern District of Texas | United States District Court. Archived from the original on 26 June 2023. Retrieved 26 June 2023.

- Brodkin, Jon (23 June 2023). "Lawyers have real bad day in court after citing fake cases made up by ChatGPT". Ars Technica. Archived from the original on 26 January 2024. Retrieved 26 June 2023.

- Belanger, Ashley (9 June 2023). "OpenAI faces defamation suit after ChatGPT completely fabricated another lawsuit". Ars Technica. Archived from the original on 1 July 2023. Retrieved 1 July 2023.

- Athaluri, Sai Anirudh; Manthena, Sandeep Varma; Kesapragada, V S R Krishna Manoj; Yarlagadda, Vineel; Dave, Tirth; Duddumpudi, Rama Tulasi Siri (11 April 2023). "Exploring the Boundaries of Reality: Investigating the Phenomenon of Artificial Intelligence Hallucination in Scientific Writing Through ChatGPT References". Cureus. 15 (4): e37432. doi:10.7759/cureus.37432. ISSN 2168-8184. PMC 10173677. PMID 37182055.

- Broad, William J. (23 December 2024). "How Hallucinatory A.I. Helps Science Dream Up Big Breakthroughs". The New York Times.

- Goddard, Jerome (25 June 2023). "Hallucinations in ChatGPT: A Cautionary Tale for Biomedical Researchers". The American Journal of Medicine. 136 (11): 1059–1060. doi:10.1016/j.amjmed.2023.06.012. ISSN 0002-9343. PMID 37369274. S2CID 259274217.

- Ji, Ziwei; Yu, Tiezheng; Xu, Yan; Lee, Nayeon (2023). Towards Mitigating Hallucination in Large Language Models via Self-Reflection. EMNLP Findings. Archived from the original on 28 January 2024. Retrieved 28 January 2024.

- Bhattacharyya, Mehul; Miller, Valerie M.; Bhattacharyya, Debjani; Miller, Larry E. (19 May 2023). "High Rates of Fabricated and Inaccurate References in ChatGPT-Generated Medical Content". Cureus. 15 (5): e39238. doi:10.7759/cureus.39238. ISSN 2168-8184. PMC 10277170. PMID 37337480.

- Else, Holly (12 January 2023). "Abstracts written by ChatGPT fool scientists". Nature. 613 (7944): 423. Bibcode:2023Natur.613..423E. doi:10.1038/d41586-023-00056-7. PMID 36635510. S2CID 255773668. Archived from the original on 25 October 2023. Retrieved 24 October 2023.

- Gao, Catherine A.; Howard, Frederick M.; Markov, Nikolay S.; Dyer, Emma C.; Ramesh, Siddhi; Luo, Yuan; Pearson, Alexander T. (26 April 2023). "Comparing scientific abstracts generated by ChatGPT to real abstracts with detectors and blinded human reviewers". npj Digital Medicine. 6 (1): 75. doi:10.1038/s41746-023-00819-6. ISSN 2398-6352. PMC 10133283. PMID 37100871.

- Emsley, Robin (19 August 2023). "ChatGPT: these are not hallucinations – they're fabrications and falsifications". Schizophrenia. 9 (1): 52. doi:10.1038/s41537-023-00379-4. ISSN 2754-6993. PMC 10439949. PMID 37598184.

- "An AI that can 'write' is feeding delusions about how smart artificial intelligence really is". Salon. 2 January 2023. Archived from the original on 5 January 2023. Retrieved 11 June 2023.

- Field, Hayden (31 May 2023). "OpenAI is pursuing a new way to fight A.I. 'hallucinations'". CNBC. Archived from the original on 10 June 2023. Retrieved 11 June 2023.

- Vincent, James (8 February 2023). "Google's AI chatbot Bard makes factual error in first demo". The Verge. Archived from the original on 12 February 2023. Retrieved 11 June 2023.

- Ferrie, C.; Kaiser, S. (2019). Neural Networks for Babies. Naperville, Illinois: Sourcebooks Jabberwocky. ISBN 978-1492671206. OCLC 1086346753.

- Matsakis, Louise (8 May 2019). "Artificial Intelligence May Not 'Hallucinate' After All". Wired. Archived from the original on 26 March 2023. Retrieved 29 December 2022.

- Gilmer, Justin; Hendrycks, Dan (6 August 2019). "A Discussion of 'Adversarial Examples Are Not Bugs, They Are Features': Adversarial Example Researchers Need to Expand What is Meant by 'Robustness'". Distill. 4 (8). doi:10.23915/distill.00019.1. S2CID 201142364. Archived from the original on 15 January 2023. Retrieved 24 January 2023.

- Zhang, Chenshuang; Zhang, Chaoning; Zheng, Sheng; Zhang, Mengchun; Qamar, Maryam; Bae, Sung-Ho; Kweon, In So (2 April 2023). "A Survey on Audio Diffusion Models: Text To Speech Synthesis and Enhancement in Generative AI". arXiv:2303.13336.

- Pageau, Jonathan. "Google Gemini is a nice image of one of the dangers of AI as we give it more power. Ideology is so thickly overlaid that it skews everything, then doubles down. First image looks about right, but scroll down". Twitter. Archived from the original on 14 August 2024. Retrieved 14 August 2024.

- Robertson, Adi (21 February 2024). "Google apologizes for 'missing the mark' after Gemini generated racially diverse Nazis". The Verge. Archived from the original on 21 February 2024. Retrieved 14 August 2024.

- "Gemini image generation got it wrong. We'll do better". Google. 23 February 2024. Archived from the original on 21 April 2024. Retrieved 14 August 2024.

- Ji, Ziwei; Jain, Sanjay; Kankanhalli, Mohan (2024). "Hallucination is Inevitable: An Innate Limitation of Large Language Models". arXiv:2401.11817.

- Nie, Feng; Yao, Jin-Ge; Wang, Jinpeng; Pan, Rong; Lin, Chin-Yew (July 2019). "A Simple Recipe towards Reducing Hallucination in Neural Surface Realisation" (PDF). Proceedings of the 57th Annual Meeting of the Association for Computational Linguistics. Association for Computational Linguistics: 2673–2679. doi:10.18653/v1/P19-1256. S2CID 196183567. Archived (PDF) from the original on 27 March 2023. Retrieved 15 January 2023.

- Dziri, Nouha; Milton, Sivan; Yu, Mo; Zaiane, Osmar; Reddy, Siva (July 2022). "On the Origin of Hallucinations in Conversational Models: Is it the Datasets or the Models?" (PDF). Proceedings of the 2022 Conference of the North American Chapter of the Association for Computational Linguistics: Human Language Technologies. Association for Computational Linguistics. pp. 5271–5285. doi:10.18653/v1/2022.naacl-main.387. S2CID 250242329. Archived (PDF) from the original on 6 April 2023. Retrieved 15 January 2023.

- Ji, Ziwei; Lee, Nayeon; Frieske, Rita; Yu, Tiezheng; Su, Dan; Xu, Yan; Ishii, Etsuko; Bang, Yejin; Chen, Delong; Chan, Ho Shu; Dai, Wenliang; Madotto, Andrea; Fung, Pascale (2023). "Survey of Hallucination in Natural Language Generation". ACM Computing Surveys. 55 (12): 1–38. arXiv:2202.03629. doi:10.1145/3571730.

- De Vynck, Gerrit (30 May 2023). "ChatGPT 'hallucinates.' Some researchers worry it isn't fixable". Washington Post. Archived from the original on 17 June 2023. Retrieved 31 May 2023.

- Varshney, Neeraj; Yao, Wenling; Zhang, Hongming; Chen, Jianshu; Yu, Dong (2023). "A Stitch in Time Saves Nine: Detecting and Mitigating Hallucinations of LLMs by Validating Low-Confidence Generation". arXiv:2307.03987.

- Šekrst, Kristina. "Unjustified untrue 'beliefs': AI hallucinations and justification logics". In Grgić, Filip; Świętorzecka, Kordula; Brożek, Anna (eds.). Logic, Knowledge, and Tradition: Essays in Honor of Srećko Kovač. Retrieved 4 June 2024.

- Luo, Junliang; Li, Tianyu; Wu, Di; Jenkin, Michael; Liu, Steve; Dudek, Gregory (2024). "Hallucination Detection and Hallucination Mitigation: An Investigation". arXiv:2401.08358.

- Dziri, Nouha; Madotto, Andrea; Zaiane, Osmar; Bose, Avishek Joey (2021). "Neural path hunter: Reducing hallucination in dialogue systems via path grounding". arXiv:2104.08455.

- Rashkin, Hannah; Reitter, David; Tomar, Gaurav Singh; Das, Dipanjan (2021). "Increasing faithfulness in knowledge-grounded dialogue with controllable features" (PDF). Proceedings of the 59th Annual Meeting of the Association for Computational Linguistics and the 11th International Joint Conference on Natural Language Processing. Archived (PDF) from the original on 15 May 2024. Retrieved 4 June 2024.

- Sun, Weiwei; Shi, Zhengliang; Gao, Shen; Ren, Pengjie; de Rijke, Maarten; Ren, Zhaochun (2022). "Contrastive Learning Reduces Hallucination in Conversations". arXiv:2212.10400.

- Zhao, Zheng; Cohen, Shay B.; Webber, Bonnie (2020). "Reducing Quantity Hallucinations in Abstractive Summarization" (PDF). Findings of the Association for Computational Linguistics: EMNLP 2020. Archived (PDF) from the original on 4 June 2024. Retrieved 4 June 2024.

- Mündler, Niels; He, Jingxuan; Jenko, Slobodan; Vechev, Martin (2023). "Self-contradictory Hallucinations of Large Language Models: Evaluation, Detection and Mitigation". arXiv:2305.15852.

- Leswing, Kif (25 April 2023). "Nvidia has a new way to prevent A.I. chatbots from 'hallucinating' wrong facts". CNBC. Retrieved 15 June 2023.

- Potsawee (9 May 2024). "potsawee/selfcheckgpt". GitHub. Archived from the original on 9 May 2024. Retrieved 9 May 2024.

- "Aimon". aimonlabs. 8 May 2024. Archived from the original on 8 May 2024. Retrieved 9 May 2024.