In intelligence and artificial intelligence, an intelligent agent (IA) is an agent that perceives its environment, takes actions autonomously in order to achieve goals, and may improve its performance by learning or acquiring knowledge.

An intelligent agent may be simple or complex: A thermostat or other control system is considered an example of an intelligent agent, as is a human being, as is any system that meets the definition, such as a firm, a state, or a biome.

Leading AI textbooks define "artificial intelligence" as the "study and design of intelligent agents", a definition that considers goal-directed behavior to be the essence of intelligence. Goal-directed agents are also described using a term borrowed from economics, "rational agent".

An agent has an "objective function" that encapsulates all the IA's goals. Such an agent is designed to create and execute whatever plan will, upon completion, maximize the expected value of the objective function.

For example, a reinforcement learning agent has a "reward function" that allows the programmers to shape the IA's desired behavior, and an evolutionary algorithm's behavior is shaped by a "fitness function".

Intelligent agents in artificial intelligence are closely related to agents in economics, and versions of the intelligent agent paradigm are studied in cognitive science, ethics, and the philosophy of practical reason, as well as in many interdisciplinary socio-cognitive modeling and computer social simulations.

Intelligent agents are often described schematically as an abstract functional system similar to a computer program.

Abstract descriptions of intelligent agents are called abstract intelligent agents (AIA) to distinguish them from their real-world implementations.

An autonomous intelligent agent is designed to function in the absence of human intervention. Intelligent agents are also closely related to software agents, which are autonomous computer programs that carry out tasks on behalf of users.

As a definition of artificial intelligence

Artificial Intelligence: A Modern Approach defines an "agent" as

"Anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators"

It defines a "rational agent" as:

"An agent that acts so as to maximize the expected value of a performance measure based on past experience and knowledge."

It also defines the field of "artificial intelligence research" as:

"The study and design of rational agents"

Padgham & Winikoff (2005) agree that an intelligent agent is situated in an environment and responds in a timely (though not necessarily real-time) manner to changes in the environment. However, intelligent agents must also proactively pursue goals in a flexible and robust way. Optional desiderata include that the agent be rational, and that the agent be capable of belief-desire-intention analysis.

Kaplan and Haenlein define artificial intelligence as "a system's ability to correctly interpret external data, to learn from such data, and to use those learnings to achieve specific goals and tasks through flexible adaptation". This definition is closely related to that of an intelligent agent.

Advantages

Philosophically, this definition of artificial intelligence avoids several lines of criticism. Unlike the Turing test, it does not refer to human intelligence in any way. Thus, there is no need to discuss whether the intelligence is "real" or "simulated" (i.e., "synthetic" vs "artificial" intelligence), and the definition does not indicate that such a machine has a mind, consciousness or true understanding. It does not appear to imply John Searle's "strong AI hypothesis". It also doesn't attempt to draw a sharp dividing line between behaviors that are "intelligent" and behaviors that are "unintelligent"; programs need only be measured in terms of their objective function.

More importantly, it has a number of practical advantages that have helped move AI research forward. It provides a reliable and scientific way to test programs; researchers can directly compare or even combine different approaches to isolated problems, by asking which agent is best at maximizing a given "goal function".

It also gives them a common language to communicate with other fields—such as mathematical optimization (which is defined in terms of "goals") or economics (which uses the same definition of a "rational agent").

Objective function

Further information: utility function (economics) and loss function (mathematics)

An agent that is assigned an explicit "goal function" is considered more intelligent if it consistently takes actions that successfully maximize its programmed goal function.

The goal can be simple: 1 if the IA wins a game of Go, 0 otherwise.

Or the goal can be complex: Perform actions mathematically similar to ones that succeeded in the past.

The "goal function" encapsulates all of the goals the agent is driven to act on; in the case of rational agents, the function also encapsulates the acceptable trade-offs between accomplishing conflicting goals.

Terminology varies. For example, some agents seek to maximize or minimize a "utility function", "objective function" or "loss function".

Goals can be explicitly defined or induced. If the AI is programmed for "reinforcement learning", it has a "reward function" that encourages some types of behavior and punishes others.

Alternatively, an evolutionary system can induce goals by using a "fitness function" to mutate and preferentially replicate high-scoring AI systems, similar to how animals evolved to innately desire certain goals such as finding food.
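The way a fitness function shapes behavior can be illustrated with a minimal sketch. The toy problem (counting 1 bits), the mutation rate, and all function names below are assumptions made for illustration only; they are not taken from any particular evolutionary system.

```python
import random
from typing import List

Genome = List[int]

def fitness(genome: Genome) -> int:
    """Hypothetical fitness function: the number of 1 bits in the genome."""
    return sum(genome)

def mutate(genome: Genome, rng: random.Random, rate: float = 0.1) -> Genome:
    """Flip each bit independently with probability `rate`."""
    return [1 - g if rng.random() < rate else g for g in genome]

def evolve(population: List[Genome], generations: int, rng: random.Random) -> List[Genome]:
    for _ in range(generations):
        # Preferential replication: the fitter half survives unchanged
        # and each survivor leaves one mutated offspring.
        population.sort(key=fitness, reverse=True)
        parents = population[: len(population) // 2]
        population = parents + [mutate(p, rng) for p in parents]
    return population

rng = random.Random(0)  # fixed seed so the run is repeatable
initial = [[rng.randint(0, 1) for _ in range(12)] for _ in range(8)]
final = evolve([g[:] for g in initial], generations=30, rng=rng)
```

Because the surviving parents are carried over unchanged, the best fitness in the population can never decrease from one generation to the next. No genome is told what the goal is; the goal is induced only by which genomes are allowed to reproduce.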

Some AI systems, such as nearest-neighbor, instead reason by analogy. These systems are not generally given goals, except to the degree that goals are implicit in their training data. Such systems can still be benchmarked if the non-goal system is framed as a system whose "goal" is to accomplish its narrow classification task.

Systems that are not traditionally considered agents, such as knowledge-representation systems, are sometimes subsumed into the paradigm by framing them as agents that have a goal of (for example) answering questions as accurately as possible; the concept of an "action" is here extended to encompass the "act" of giving an answer to a question. As an additional extension, mimicry-driven systems can be framed as agents who are optimizing a "goal function" based on how closely the IA succeeds in mimicking the desired behavior. In the generative adversarial networks of the 2010s, an "encoder"/"generator" component attempts to mimic and improvise human text composition. The generator is attempting to maximize a function encapsulating how well it can fool an antagonistic "predictor"/"discriminator" component.

While symbolic AI systems often accept an explicit goal function, the paradigm can also be applied to neural networks and to evolutionary computing. Reinforcement learning can generate intelligent agents that appear to act in ways intended to maximize a "reward function". Sometimes, rather than setting the reward function to be directly equal to the desired benchmark evaluation function, machine learning programmers will use reward shaping to initially give the machine rewards for incremental progress in learning. Yann LeCun stated in 2018, "Most of the learning algorithms that people have come up with essentially consist of minimizing some objective function." AlphaZero chess had a simple objective function; each win counted as +1 point, and each loss counted as -1 point. An objective function for a self-driving car would have to be more complicated. Evolutionary computing can evolve intelligent agents that appear to act in ways intended to maximize a "fitness function" that influences how many descendants each agent is allowed to leave.
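The reward shaping mentioned above can be sketched in its potential-based form, the variant analyzed by Ng, Harada and Russell (1999), in which a term γΦ(s') − Φ(s) is added to the base reward. The corridor environment, the goal position, the discount factor, and the choice of potential below are all hypothetical and chosen only to make the example small.

```python
GOAL = 5      # hypothetical goal position in a one-dimensional corridor
GAMMA = 0.9   # hypothetical discount factor

def base_reward(next_state: int) -> float:
    """Sparse benchmark reward: 1 only when the goal is reached."""
    return 1.0 if next_state == GOAL else 0.0

def potential(state: int) -> float:
    """Assumed potential: states closer to the goal have higher potential."""
    return -abs(GOAL - state)

def shaped_reward(state: int, next_state: int, gamma: float = GAMMA) -> float:
    """Base reward plus the potential-based shaping term."""
    return base_reward(next_state) + gamma * potential(next_state) - potential(state)
```

With these assumptions, a step from position 2 toward the goal earns a larger shaped reward than a step away from it, even though the base reward is zero in both cases. The agent therefore receives feedback for incremental progress long before it first reaches the goal.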

The mathematical formalism of AIXI was proposed as a maximally intelligent agent in this paradigm. However, AIXI is uncomputable. In the real world, an IA is constrained by finite time and hardware resources, and scientists compete to produce algorithms that can achieve progressively higher scores on benchmark tests with existing hardware.

Agent function

A simple agent program can be defined mathematically as a function f (called the "agent function") which maps every possible percept sequence to a possible action the agent can perform or to a coefficient, feedback element, function or constant that affects eventual actions:

f : P* → A

where P* denotes the set of percept sequences and A the set of actions.

The agent function is an abstract concept, as it could incorporate various principles of decision making, such as calculation of the utility of individual options, deduction over logic rules, or fuzzy logic.

The agent program, instead, maps every possible percept to an action.

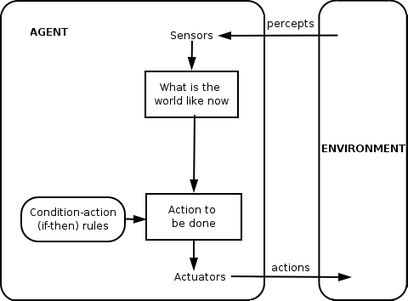

We use the term percept to refer to the agent's perceptual inputs at any given instant. In the following figures, an agent is anything that can be viewed as perceiving its environment through sensors and acting upon that environment through actuators.
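The distinction between the agent function (defined over whole percept sequences) and the agent program (called with one percept at a time) can be made concrete with a table-driven sketch. The two-cell vacuum world, the percept format, and the table entries are assumptions for illustration, and the table is deliberately incomplete.

```python
from typing import Callable, Dict, List, Tuple

Percept = Tuple[str, str]  # (location, status) in a hypothetical two-cell world
Action = str

# Agent function: an explicit table from percept sequences to actions.
TABLE: Dict[Tuple[Percept, ...], Action] = {
    (("A", "dirty"),): "suck",
    (("A", "clean"),): "right",
    (("A", "clean"), ("B", "dirty")): "suck",
    (("A", "clean"), ("B", "clean")): "left",
}

def make_table_driven_agent(table: Dict[Tuple[Percept, ...], Action]) -> Callable[[Percept], Action]:
    """Build an agent program that sees one percept per call but remembers
    the full sequence so that it can look up the agent function."""
    history: List[Percept] = []

    def program(percept: Percept) -> Action:
        history.append(percept)
        # Sequences missing from the table fall back to doing nothing.
        return table.get(tuple(history), "noop")

    return program

agent = make_table_driven_agent(TABLE)
actions = [agent(("A", "clean")), agent(("B", "dirty"))]
```

The table grows with every possible percept sequence, which is why practical agent programs compute the same mapping in more compact ways.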

Classes of intelligent agents

Russell and Norvig's classification

Russell & Norvig (2003) group agents into five classes based on their degree of perceived intelligence and capability:

Simple reflex agents

Simple reflex agents act only on the basis of the current percept, ignoring the rest of the percept history. The agent function is based on the condition-action rule: "if condition, then action".

This agent function only succeeds when the environment is fully observable. Some reflex agents can also contain information on their current state which allows them to disregard conditions whose actuators are already triggered.

Infinite loops are often unavoidable for simple reflex agents operating in partially observable environments. If the agent can randomize its actions, it may be possible to escape from infinite loops.
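A condition-action rule set can be sketched as an ordered list of (condition, action) pairs evaluated against the current percept only. The vacuum-world percepts and the rule contents are hypothetical.

```python
from typing import Callable, List, Tuple

Percept = Tuple[str, str]  # (location, status) in a hypothetical two-cell world

# Each rule pairs a condition on the current percept with an action.
RULES: List[Tuple[Callable[[Percept], bool], str]] = [
    (lambda p: p[1] == "dirty", "suck"),
    (lambda p: p[0] == "A", "right"),
    (lambda p: p[0] == "B", "left"),
]

def simple_reflex_agent(percept: Percept) -> str:
    """Return the action of the first rule whose condition matches.
    No percept history is stored between calls."""
    for condition, action in RULES:
        if condition(percept):
            return action
    return "noop"
```

Once both cells are clean, this agent alternates between "right" and "left" indefinitely, because nothing in the current percept tells it that the other cell has already been cleaned. This is a small instance of the looping behavior described above.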

Model-based reflex agents

A model-based agent can handle partially observable environments. Its current state is stored inside the agent, which maintains some kind of structure describing the part of the world that cannot be seen. This knowledge about "how the world works" is called a model of the world, hence the name "model-based agent".

A model-based reflex agent should maintain some sort of internal model that depends on the percept history and thereby reflects at least some of the unobserved aspects of the current state. Percept history and the impact of actions on the environment can be determined by using the internal model. It then chooses an action in the same way as a reflex agent.

An agent may also use models to describe and predict the behaviors of other agents in the environment.
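A minimal sketch of a model-based reflex agent follows, assuming the same hypothetical two-cell vacuum world. The internal model here is just the last known status of each cell, and the class and attribute names are illustrative.

```python
from typing import Dict, Optional, Tuple

Percept = Tuple[str, str]  # (location, status)

class ModelBasedVacuumAgent:
    def __init__(self) -> None:
        # Internal model: last known status of each cell (None = never observed).
        self.model: Dict[str, Optional[str]] = {"A": None, "B": None}

    def __call__(self, percept: Percept) -> str:
        location, status = percept
        self.model[location] = status       # update the model from the percept
        if status == "dirty":
            self.model[location] = "clean"  # predicted effect of the "suck" action
            return "suck"
        if all(s == "clean" for s in self.model.values()):
            return "noop"                   # the model says nothing is left to do
        return "right" if location == "A" else "left"

agent = ModelBasedVacuumAgent()
trace = [agent(("A", "dirty")), agent(("A", "clean")), agent(("B", "clean"))]
```

Because the agent remembers what it has seen of the cell it is not currently in, it can stop once its model says both cells are clean, instead of moving back and forth forever.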

Goal-based agents

Goal-based agents further expand on the capabilities of the model-based agents, by using "goal" information. Goal information describes situations that are desirable. This provides the agent a way to choose among multiple possibilities, selecting the one which reaches a goal state. Search and planning are the subfields of artificial intelligence devoted to finding action sequences that achieve the agent's goals.
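Finding an action sequence that reaches a goal state can be sketched with breadth-first search, one of the simplest search methods. The state graph, the room names, and the action names are hypothetical.

```python
from collections import deque
from typing import Dict, List, Optional

# Hypothetical world model: state -> {action: resulting state}
TRANSITIONS: Dict[str, Dict[str, str]] = {
    "hall":    {"north": "kitchen", "east": "study"},
    "kitchen": {"south": "hall", "east": "pantry"},
    "study":   {"west": "hall"},
    "pantry":  {"west": "kitchen"},
}

def plan(start: str, goal: str) -> Optional[List[str]]:
    """Breadth-first search for a shortest action sequence from start to goal.
    Returns None if the goal cannot be reached."""
    frontier = deque([(start, [])])
    visited = {start}
    while frontier:
        state, actions = frontier.popleft()
        if state == goal:
            return actions
        for action, next_state in TRANSITIONS[state].items():
            if next_state not in visited:
                visited.add(next_state)
                frontier.append((next_state, actions + [action]))
    return None
```

For example, `plan("hall", "pantry")` returns `["north", "east"]`. The agent only distinguishes goal from non-goal states here; it has no way to prefer one successful plan over another except by length.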

Utility-based agents

Goal-based agents only distinguish between goal states and non-goal states. It is also possible to define a measure of how desirable a particular state is. This measure can be obtained through the use of a utility function which maps a state to a measure of the utility of the state. A more general performance measure should allow a comparison of different world states according to how well they satisfy the agent's goals. The term utility can be used to describe how "happy" the agent is.

A rational utility-based agent chooses the action that maximizes the expected utility of the action outcomes, that is, what the agent expects to derive, on average, given the probabilities and utilities of each outcome. A utility-based agent has to model and keep track of its environment, tasks that have involved a great deal of research on perception, representation, reasoning, and learning.
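Expected-utility maximization can be sketched as follows. The two actions, their outcome probabilities, and their utilities are invented numbers used only to show the calculation.

```python
from typing import Dict, List, Tuple

# Hypothetical outcome model: action -> list of (probability, utility) pairs
OUTCOMES: Dict[str, List[Tuple[float, float]]] = {
    "highway":   [(0.8, 10.0), (0.2, -20.0)],
    "back_road": [(1.0, 3.0)],
}

def expected_utility(outcomes: List[Tuple[float, float]]) -> float:
    """Probability-weighted average utility of an action's outcomes."""
    return sum(p * u for p, u in outcomes)

def choose_action(model: Dict[str, List[Tuple[float, float]]]) -> str:
    """Pick the action with the highest expected utility."""
    return max(model, key=lambda action: expected_utility(model[action]))
```

Under these assumed numbers the highway has expected utility 0.8 × 10 + 0.2 × (−20) = 4, compared with 3 for the back road, so `choose_action(OUTCOMES)` selects the highway even though it carries a risk of a bad outcome.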

Learning agents

Learning has the advantage of allowing agents to initially operate in unknown environments and become more competent than their initial knowledge alone might allow. The most important distinction is between the "learning element", responsible for making improvements, and the "performance element", responsible for selecting external actions.

The learning element uses feedback from the "critic" on how the agent is doing and determines how the performance element, or "actor", should be modified to do better in the future. The performance element, previously considered the entire agent, takes in percepts and decides on actions.

The last component of the learning agent is the "problem generator". It is responsible for suggesting actions that will lead to new and informative experiences.
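The four components described above can be sketched in a single small class. This is an illustrative arrangement rather than a standard implementation: the environment is assumed to return a fixed reward per action, and the method names simply mirror the component names.

```python
from typing import Callable, Dict, List, Optional

class LearningAgent:
    def __init__(self, actions: List[str]) -> None:
        self.values: Dict[str, float] = {a: 0.0 for a in actions}  # learned estimates
        self.counts: Dict[str, int] = {a: 0 for a in actions}

    def performance_element(self) -> str:
        """Select the action currently believed to be best."""
        return max(self.values, key=self.values.get)

    def problem_generator(self) -> Optional[str]:
        """Suggest an action that has not been tried yet, if any remain."""
        untried = [a for a, n in self.counts.items() if n == 0]
        return untried[0] if untried else None

    def critic(self, reward: float, action: str) -> float:
        """Feedback: how much better or worse the outcome was than expected."""
        return reward - self.values[action]

    def learning_element(self, action: str, error: float) -> None:
        """Move the estimate for the action toward the observed reward."""
        self.counts[action] += 1
        self.values[action] += error / self.counts[action]

    def step(self, environment: Callable[[str], float]) -> str:
        action = self.problem_generator() or self.performance_element()
        reward = environment(action)
        self.learning_element(action, self.critic(reward, action))
        return action

REWARDS = {"a": 0.2, "b": 1.0, "c": 0.5}  # hypothetical fixed rewards
agent = LearningAgent(list(REWARDS))
history = [agent.step(lambda action: REWARDS[action]) for _ in range(5)]
```

The agent first tries each action once at the suggestion of the problem generator, then settles on the action with the highest learned value. Without the problem generator, the performance element would keep repeating whichever action happened to look best at the start.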

Weiss's classification

Weiss (2013) defines four classes of agents:

- Logic-based agents – in which the decision about what action to perform is made via logical deduction.

- Reactive agents – in which decision making is implemented in some form of direct mapping from situation to action.

- Belief-desire-intention agents – in which decision making depends upon the manipulation of data structures representing the beliefs, desires, and intentions of the agent.

- Layered architectures – in which decision making is realized via various software layers, each of which is more or less explicitly reasoning about the environment at different levels of abstraction.

Other

In 2013, Alexander Wissner-Gross published a theory pertaining to Freedom and Intelligence for intelligent agents.

Hierarchies of agents

Main article: Multi-agent system

Intelligent agents can be organized hierarchically into multiple "sub-agents". Intelligent sub-agents process and perform lower-level functions. Taken together, the intelligent agent and sub-agents create a complete system that can accomplish difficult tasks or goals with behaviors and responses that display a form of intelligence.

Generally, an agent can be constructed by separating its body into sensors and actuators, so that a complex perception system takes a description of the world as input for a controller, and the controller outputs commands to the actuators. However, a hierarchy of controller layers is often necessary to balance the immediate reaction desired for low-level tasks and the slow reasoning about complex, high-level goals.

Alternative definitions and uses

"Intelligent agent" is also often used as a vague term, sometimes synonymous with "virtual personal assistant". Some 20th-century definitions characterize an agent as a program that aids a user or that acts on behalf of a user. These examples are known as software agents, and sometimes an "intelligent software agent" (that is, a software agent with intelligence) is referred to as an "intelligent agent".

According to Nikola Kasabov, IA systems should exhibit the following characteristics:

- Accommodate new problem solving rules incrementally

- Adapt online and in real time

- Analyze themselves in terms of behavior, error and success

- Learn and improve through interaction with the environment (embodiment)

- Learn quickly from large amounts of data

- Have memory-based exemplar storage and retrieval capacities

- Have parameters to represent short- and long-term memory, age, forgetting, etc.

Applications

Hallerbach et al. discussed the application of agent-based approaches for the development and validation of automated driving systems via a digital twin of the vehicle-under-test and microscopic traffic simulation based on independent agents. Waymo has created a multi-agent simulation environment, Carcraft, to test algorithms for self-driving cars. It simulates traffic interactions between human drivers, pedestrians and automated vehicles. People's behavior is imitated by artificial agents based on data of real human behavior. The basic idea of using agent-based modeling to understand self-driving cars was discussed as early as 2003.

See also

- Ambient intelligence

- Artificial conversational entity

- Artificial intelligence systems integration

- Autonomous agent

- Cognitive architectures

- Cognitive radio – a practical field for implementation

- Cybernetics

- DAYDREAMER

- Embodied agent

- Federated search – the ability for agents to search heterogeneous data sources using a single vocabulary

- Friendly artificial intelligence

- Fuzzy agents – IA implemented with adaptive fuzzy logic

- GOAL agent programming language

- Hybrid intelligent system

- Intelligent control

- Intelligent system

- JACK Intelligent Agents

- Multi-agent system and multiple-agent system – multiple interactive agents

- Reinforcement learning

- Semantic Web – making data on the Web available for automated processing by agents

- Social simulation

- Software agent

- Software bot

Notes

- The Padgham & Winikoff definition explicitly covers only social agents that interact with other agents.

Inline references

- Russell & Norvig 2003, chpt. 2.

- Bringsjord, Selmer; Govindarajulu, Naveen Sundar (12 July 2018). "Artificial Intelligence". In Edward N. Zalta (ed.). The Stanford Encyclopedia of Philosophy (Summer 2020 Edition).

- Wolchover, Natalie (30 January 2020). "Artificial Intelligence Will Do What We Ask. That's a Problem". Quanta Magazine. Retrieved 21 June 2020.

- Bull, Larry (1999). "On model-based evolutionary computation". Soft Computing. 3 (2): 76–82. doi:10.1007/s005000050055. S2CID 9699920.

- Russell & Norvig 2003, pp. 4–5, 32, 35, 36 and 56.

- Russell & Norvig (2003)

- Lin Padgham and Michael Winikoff. Developing intelligent agent systems: A practical guide. Vol. 13. John Wiley & Sons, 2005.

- Kaplan, Andreas; Haenlein, Michael (1 January 2019). "Siri, Siri, in my hand: Who's the fairest in the land? On the interpretations, illustrations, and implications of artificial intelligence". Business Horizons. 62 (1): 15–25. doi:10.1016/j.bushor.2018.08.004. S2CID 158433736.

- Russell & Norvig 2003, p. 27.

- Domingos 2015, Chapter 5.

- Domingos 2015, Chapter 7.

- Lindenbaum, M., Markovitch, S., & Rusakov, D. (2004). Selective sampling for nearest neighbor classifiers. Machine learning, 54(2), 125–152.

- "Generative adversarial networks: What GANs are and how they've evolved". VentureBeat. 26 December 2019. Retrieved 18 June 2020.

- Wolchover, Natalie (January 2020). "Artificial Intelligence Will Do What We Ask. That's a Problem". Quanta Magazine. Retrieved 18 June 2020.

- Andrew Y. Ng, Daishi Harada, and Stuart Russell. "Policy invariance under reward transformations: Theory and application to reward shaping." In ICML, vol. 99, pp. 278-287. 1999.

- Martin Ford. Architects of Intelligence: The truth about AI from the people building it. Packt Publishing Ltd, 2018.

- "Why AlphaZero's Artificial Intelligence Has Trouble With the Real World". Quanta Magazine. 2018. Retrieved 18 June 2020.

- Adams, Sam; Arel, Itmar; Bach, Joscha; Coop, Robert; Furlan, Rod; Goertzel, Ben; Hall, J. Storrs; Samsonovich, Alexei; Scheutz, Matthias; Schlesinger, Matthew; Shapiro, Stuart C.; Sowa, John (15 March 2012). "Mapping the Landscape of Human-Level Artificial General Intelligence". AI Magazine. 33 (1): 25. doi:10.1609/aimag.v33i1.2322.

- Hutson, Matthew (27 May 2020). "Eye-catching advances in some AI fields are not real". Science | AAAS. Retrieved 18 June 2020.

- Russell & Norvig 2003, p. 33

- Salamon, Tomas (2011). Design of Agent-Based Models. Repin: Bruckner Publishing. pp. 42–59. ISBN 978-80-904661-1-1.

- Nilsson, Nils J. (April 1996). "Artificial intelligence: A modern approach". Artificial Intelligence. 82 (1–2): 369–380. doi:10.1016/0004-3702(96)00007-0. ISSN 0004-3702.

- Russell & Norvig 2003, pp. 46–54

- Stefano Albrecht and Peter Stone (2018). Autonomous Agents Modelling Other Agents: A Comprehensive Survey and Open Problems. Artificial Intelligence, Vol. 258, pp. 66-95. https://doi.org/10.1016/j.artint.2018.01.002

- "A Universal Formula for Intelligence". Geeks out of the box. 2019-12-04. Retrieved 2022-10-11.

- Wissner-Gross, A. D.; Freer, C. E. (2013-04-19). "Causal Entropic Forces". Physical Review Letters. 110 (16): 168702. Bibcode:2013PhRvL.110p8702W. doi:10.1103/PhysRevLett.110.168702. hdl:1721.1/79750. PMID 23679649.

- Poole, David; Mackworth, Alan. "1.3 Agents Situated in Environments‣ Chapter 2 Agent Architectures and Hierarchical Control‣ Artificial Intelligence: Foundations of Computational Agents, 2nd Edition". artint.info. Retrieved 28 November 2018.

- Fingar, Peter (2018). "Competing For The Future With Intelligent Agents... And A Confession". Forbes Sites. Retrieved 18 June 2020.

- Burgin, Mark, and Gordana Dodig-Crnkovic. "A systematic approach to artificial agents." arXiv preprint arXiv:0902.3513 (2009).

- Kasabov 1998.

- Hallerbach, S.; Xia, Y.; Eberle, U.; Koester, F. (2018). "Simulation-Based Identification of Critical Scenarios for Cooperative and Automated Vehicles". SAE International Journal of Connected and Automated Vehicles. 1 (2). SAE International: 93. doi:10.4271/2018-01-1066.

- Madrigal, Alexis C. "Inside Waymo's Secret World for Training Self-Driving Cars". The Atlantic. Retrieved 14 August 2020.

- Connors, J.; Graham, S.; Mailloux, L. (2018). "Cyber Synthetic Modeling for Vehicle-to-Vehicle Applications". In International Conference on Cyber Warfare and Security. Academic Conferences International Limited: 594-XI.

- Yang, Guoqing; Wu, Zhaohui; Li, Xiumei; Chen, Wei (2003). "SVE: embedded agent based smart vehicle environment". Proceedings of the 2003 IEEE International Conference on Intelligent Transportation Systems. Vol. 2. pp. 1745–1749. doi:10.1109/ITSC.2003.1252782. ISBN 0-7803-8125-4. S2CID 110177067.

Other references

- Domingos, Pedro (September 22, 2015). The Master Algorithm: How the Quest for the Ultimate Learning Machine Will Remake Our World. Basic Books. ISBN 978-0465065707.

- Russell, Stuart J.; Norvig, Peter (2003). Artificial Intelligence: A Modern Approach (2nd ed.). Upper Saddle River, New Jersey: Prentice Hall. Chapter 2. ISBN 0-13-790395-2.

- Kasabov, N. (1998). "Introduction: Hybrid intelligent adaptive systems". International Journal of Intelligent Systems. 13 (6): 453–454. doi:10.1002/(SICI)1098-111X(199806)13:6<453::AID-INT1>3.0.CO;2-K. S2CID 120318478.

- Weiss, G. (2013). Multiagent systems (2nd ed.). Cambridge, MA: MIT Press. ISBN 978-0-262-01889-0.